In the UK we are used to having a reliable and stable electricity grid. So stable that you can keep time with it.

Before quartz clocks became common, mains-powered clocks used the electricity grid frequency of 50 Hz as their time reference. You can still find the odd central heating timer or old alarm clock that uses it.
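As a rough illustration of why a stable frequency matters for timekeeping, here is a minimal sketch of a clock that simply counts mains cycles and assumes 50 of them make one second. The function name and the 49.9 Hz example are my own assumptions for illustration, not taken from any real clock design.

```python
NOMINAL_HZ = 50.0  # UK nominal grid frequency


def clock_error_seconds(actual_hz: float, elapsed_seconds: float) -> float:
    """Seconds gained (+) or lost (-) by a clock that counts mains cycles
    and assumes exactly 50 cycles per second."""
    cycles_counted = actual_hz * elapsed_seconds
    displayed_seconds = cycles_counted / NOMINAL_HZ
    return displayed_seconds - elapsed_seconds


# Illustrative only: a grid averaging 49.9 Hz for one hour
# makes the clock lose roughly 7.2 seconds.
print(round(clock_error_seconds(49.9, 3600), 1))
```

The clock is only as accurate as the long-run average frequency, which is why the grid being held close to 50 Hz makes this work at all.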

(Image credit: Wikimedia)

Frequency Response

Here is a quick overview of how it is done, but just to note, I’m not an expert. If you want to know more, there is plenty to read on the National Grid ESO website.

To keep the 50 Hz frequency stable, the National Grid is continuously adding and removing generating capacity in response to the varying load. This is called Frequency Response.

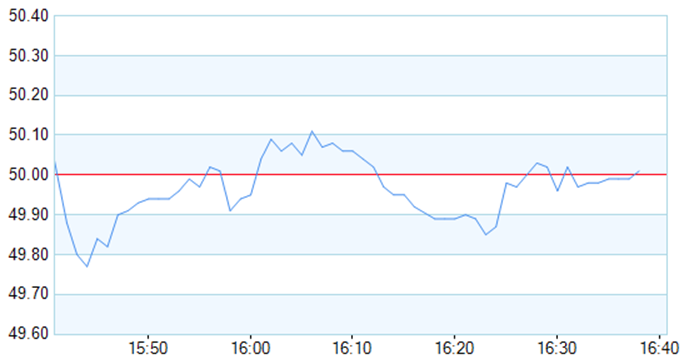

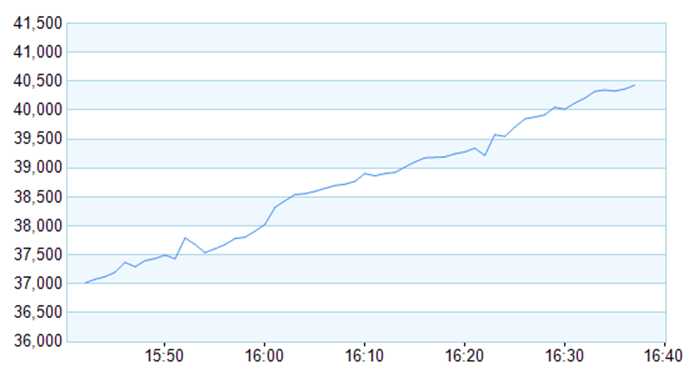

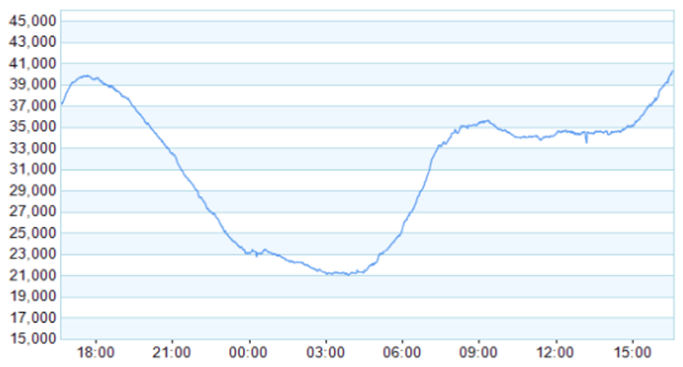

The graphs below show the grid frequency and demand for the last hour, and the demand over the last 24 hours, ref: https://extranet.nationalgrid.com/RealTime

Figure 1: Frequency over 1 hour

Figure 2: Grid demand over 1 hour

Figure 3: Grid demand over 24 hours

As can be seen, the demand can change by hundreds of MW in a very short time and, in today’s numbers, by over 18 GW between 6pm and 3am.

The current state

In years gone by, the majority of the power generated was from large steam turbines at coal or nuclear power stations. All these turbines and generators provided massive stability and momentum from thousands and thousands of tons of spinning metal. It was a non-competitive world and there was plenty of capacity in reserve for the odd bump or problem.

Enter the modern world, where an increasing percentage of our electricity comes from renewables, without all that rotating mass and momentum to absorb any faults or sudden changes in load.
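To put a rough number on what that rotating mass buys you, the sketch below uses the standard swing-equation approximation for the initial rate of change of frequency after a sudden loss of generation: the rate is proportional to the power lost and inversely proportional to the kinetic energy stored in the spinning machines. The function name and all of the example figures are assumptions chosen for round arithmetic, not real grid data.

```python
def initial_rocof_hz_per_s(power_loss_mw: float,
                           stored_energy_mw_s: float,
                           nominal_hz: float = 50.0) -> float:
    """Initial rate of change of frequency (Hz/s) after losing generation.

    Swing-equation approximation: df/dt = -f0 * dP / (2 * E_k),
    where E_k is the kinetic energy stored in rotating machines (MW*s).
    """
    return -nominal_hz * power_loss_mw / (2.0 * stored_energy_mw_s)


# Hypothetical figures: the same 1,000 MW loss on a "heavy" and a "light" grid.
heavy = initial_rocof_hz_per_s(1000, 200_000)  # lots of spinning metal
light = initial_rocof_hz_per_s(1000, 100_000)  # half the stored energy
print(heavy, light)
```

Halving the stored energy doubles how fast the frequency starts to fall, which leaves less time for any reserve to respond.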

In order to keep the grid stable, the National Grid contracts capacity that can be rapidly turned on or off to balance the varying usage, spikes in demand or faults. This frequency response reserve capacity is split into three categories. Each level has limited reserve and, typically, the bigger it is, the slower it responds:

- Primary: Response < 1 second, sustainable for 20 seconds

- Secondary: Response < 10 seconds, sustainable for 30 minutes

- Fast: Response < 10 seconds, sustainable indefinitely
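The three categories above can be written down as a small table to see how they overlap in time. This is only a sketch using the figures from the list. The class and function names are made up for illustration, and it assumes the worst case where each category takes its full response time to start and then runs for its full sustain period.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponseTier:
    name: str
    respond_within_s: float  # worst-case time to start delivering
    sustain_for_s: float     # how long delivery can be held


# Figures taken from the list above; "indefinitely" is modelled as infinity.
TIERS = [
    ResponseTier("Primary", 1, 20),
    ResponseTier("Secondary", 10, 30 * 60),
    ResponseTier("Fast", 10, math.inf),
]


def tiers_delivering(seconds_after_event: float) -> list[str]:
    """Names of the tiers delivering at a given time after an event,
    under the worst-case assumption described in the text."""
    return [
        tier.name
        for tier in TIERS
        if tier.respond_within_s
        <= seconds_after_event
        <= tier.respond_within_s + tier.sustain_for_s
    ]


for t in (5, 15, 60):
    print(t, tiers_delivering(t))
```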

The contracts for this capacity are auctioned, with bidders offering a wide range of solutions to the problem. These can range from a large power station to aggregating supermarket freezers and turning them all off for a few seconds when demand peaks.

Many hands make the lights work

It seems strange that turning off freezers or leaning on household Tesla batteries would make much difference. But when many hundreds or thousands of small generators or supermarket freezers are aggregated together, it adds up to quite a large amount of load that can be turned off within a few seconds. Freezers have a big thermal mass and do not care about being off for a few minutes, and batteries can provide or absorb power as needed.
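The arithmetic behind aggregation is simple enough to sketch. The unit counts and per-unit power figures below are hypothetical, picked only to show the scale, and the function name is my own.

```python
def aggregated_mw(unit_count: int, kw_per_unit: float) -> float:
    """Total switchable power in MW from many identical small assets."""
    return unit_count * kw_per_unit / 1000.0


# Hypothetical fleet sizes, for illustration only.
freezers_mw = aggregated_mw(5_000, 20.0)   # 5,000 freezer installations at 20 kW each
batteries_mw = aggregated_mw(20_000, 5.0)  # 20,000 home batteries at 5 kW each
print(freezers_mw, batteries_mw, freezers_mw + batteries_mw)
```

With those made-up numbers the fleet adds up to 200 MW, which is the same order as the swings in demand seen over a short time.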

This is the basis of “Demand Side Response” that can be used in smart grids to help balance the load and stabilise the frequency.

Faulty towers

In the event of a fault on the grid, generators connected to the grid are required to comply with strict rules and limits. These codes dictate such things as why and when a generator should disconnect from the grid and when it should reconnect.

For example, a generator should disconnect if the voltage or frequency falls below or rises above certain limits. Other triggers include earth faults or overcurrent. Usually the generator would automatically reconnect as soon as the cause of the fault is removed.
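The limit checks described above can be sketched as a simple window test. The thresholds below are placeholders I have assumed for illustration and are not quoted from any grid code, and real protection relays also apply time delays that this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Placeholder values, assumed for illustration only.
    min_hz: float = 47.5
    max_hz: float = 52.0
    min_voltage_pu: float = 0.9  # per-unit of nominal voltage
    max_voltage_pu: float = 1.1


def trip_reasons(freq_hz: float, voltage_pu: float,
                 limits: Limits = Limits()) -> list[str]:
    """Return the reasons (if any) a generator should disconnect."""
    reasons = []
    if freq_hz < limits.min_hz:
        reasons.append("under-frequency")
    elif freq_hz > limits.max_hz:
        reasons.append("over-frequency")
    if voltage_pu < limits.min_voltage_pu:
        reasons.append("under-voltage")
    elif voltage_pu > limits.max_voltage_pu:
        reasons.append("over-voltage")
    return reasons


def should_disconnect(freq_hz: float, voltage_pu: float) -> bool:
    """True when any measured value is outside its permitted window."""
    return bool(trip_reasons(freq_hz, voltage_pu))


print(trip_reasons(50.0, 1.0))   # healthy grid: nothing to report
print(trip_reasons(47.0, 0.85))  # both frequency and voltage too low
```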

The day the Earth stood still (OK, just a bit of the UK)

On August 9th 2019, something of a perfect storm happened. A lightning strike on part of the national electricity transmission system caused a fault and caused some small generators to disconnect. Near-simultaneously, two large generators experienced technical issues and were unable to continue providing power.

As a result, the grid frequency fell rapidly. This in turn caused multiple generators to disconnect as a result of the under-frequency fault.

The combined losses of generation went beyond the reserve capacity available. This triggered what is called “demand disconnection”: a pre-planned disconnection of large areas by the electricity distributors, allowing the grid demand to be rapidly reduced and stability restored. This then allows the generating capacity to recover and supplies to be reconnected in a controlled way.

The investigation found that many generators had disconnected too soon and that not all the reserve capacity that had been contracted was available. Power was restored in 45 minutes; however, the ongoing disruption from stranded trains etc. lasted most of the day.
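The balance behind demand disconnection is just a shortfall calculation: whatever generation is lost beyond the available reserve has to be matched by shedding load. The figures below are hypothetical and are not the numbers from the August 2019 event.

```python
def demand_to_disconnect_mw(generation_lost_mw: float,
                            reserve_available_mw: float) -> float:
    """Load that must be shed once the available reserve is used up."""
    return max(0.0, generation_lost_mw - reserve_available_mw)


# Hypothetical figures, for illustration only.
print(demand_to_disconnect_mw(1500.0, 1000.0))  # reserve exhausted, 500 MW to shed
print(demand_to_disconnect_mw(800.0, 1000.0))   # reserve covers the loss
```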

The smart gridlock

There are many, many smart devices connected to the grid (think small generators, batteries, building management systems, etc.). From years of research we know that smart and secure are often mutually exclusive.

So, we all know that it’s not a great leap to imagine a scenario where an attacker could take control.

Also consider the effect that large aggregations of relatively small electrical loads or generators can have on maintaining grid stability.

Now what would happen if the tables were turned and these aggregated assets were used to deliberately destabilise the grid?

Attack scenarios

Method 1: Deplete primary frequency response on under frequency. If primary response could be sufficiently depleted, subsequent low frequency events could cause other generators to trip and cause a cascade effect:

- Turn all generators off and all load on

- Wait at least 5 seconds, then turn all the generators back on and all the load off again; this would need to be complete before 10 seconds

- Wait ~ 10 seconds and repeat

Method 2: Monitor grid frequency, attempt to create frequency instability:

- Turn all generators off and all load on, for long enough so the secondary reserve responds

- Hopefully there would be a small over frequency swing

- Restore generators, turn load off

- Hopefully there would be a bigger over frequency swing

- Turn all generators off and all load on

- Whilst monitoring grid response adjust timing and attempt to amplify / find resonance in the response

Method 3: Long term mayhem, target operators, manufacturers and smart grid market:

- Randomly turn generators and load off in an attempt to simulate reliability issues

- Start small, get a reputation of unreliability

- Ramp up, especially outside normal working hours

An attack targeting multiple small installations from multiple manufacturers, and ideally launched from multiple attack locations, would be very difficult to trace and mitigate.