As Strava are getting a lot of heat as a result of publishing user activity data, we thought it timely to review a serious security flaw in Runtastic that we found a while back. It has been fixed now, but it took the vendor nearly a year to do so.

The flaw

When tracking a live run in real time, privacy settings were not correctly applied to the activity map. This meant that one could simply iterate sequentially through live sessions and track Runtastic users in real time, even though they had selected the option to prevent this. We tracked each other out on runs many times, even though we had set the relevant privacy setting.

It would be trivial to stalk a runner in real time, allowing unpleasant persons to locate the profile of, say, a lone female runner, then find out exactly where they are in real time.

We reported it to Runtastic privately in early 2015. The finding wasn’t acknowledged and we received a very generic response about privacy settings that didn’t address the issue at all. As the vulnerability wasn’t fixed at the time, we decided not to publish, so as not to expose lone runners to attacks.

The attack

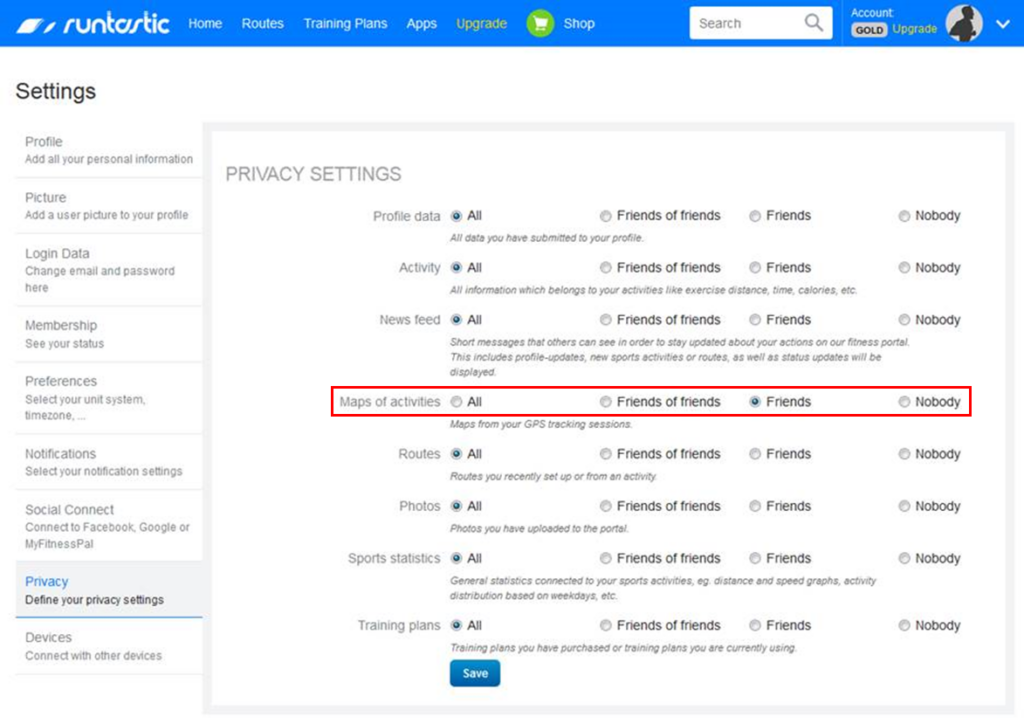

As part of a review of numerous run tracking apps (including Strava) we looked at the privacy settings of Runtastic. First, we set the privacy of ‘maps of activities’ to ‘friends’. Personally, I would set it to ‘nobody’ but I understand why running friends might want to track each other in real time, perhaps for safety or training purposes.

The setting only prevented an unauthenticated user from seeing run maps AFTER the runner had completed the run. DURING a run, we could see the runner’s location in real time, even though we hadn’t logged in to the app and were not a ‘friend’ of the runner.
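To make the failure concrete, here is a minimal Python sketch of the behaviour we observed. We never saw Runtastic’s server code, so every name here (`Session`, `MapPrivacy`, `can_view_map_flawed`, `can_view_map`) is hypothetical. It only models the symptom: the privacy setting was honoured for completed runs but not for live ones.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional, Set


class MapPrivacy(Enum):
    EVERYONE = "everyone"
    FRIENDS = "friends"
    NOBODY = "nobody"


@dataclass
class Session:
    owner: str
    privacy: MapPrivacy
    live: bool
    friends: Set[str] = field(default_factory=set)


def _allowed_by_privacy(session: Session, viewer: Optional[str]) -> bool:
    """Apply the owner's 'maps of activities' setting. viewer=None means not logged in."""
    if viewer is not None and viewer == session.owner:
        return True
    if session.privacy is MapPrivacy.EVERYONE:
        return True
    if session.privacy is MapPrivacy.FRIENDS:
        return viewer is not None and viewer in session.friends
    return False  # MapPrivacy.NOBODY


def can_view_map_flawed(session: Session, viewer: Optional[str]) -> bool:
    # Assumed model of the bug: live sessions never reach the privacy check.
    if session.live:
        return True
    return _allowed_by_privacy(session, viewer)


def can_view_map(session: Session, viewer: Optional[str]) -> bool:
    # Expected behaviour: the same check applies whether or not the run is live.
    return _allowed_by_privacy(session, viewer)


if __name__ == "__main__":
    run = Session(owner="alice", privacy=MapPrivacy.FRIENDS, live=True, friends={"bob"})
    print(can_view_map_flawed(run, None))  # unauthenticated viewer, flawed check
    print(can_view_map(run, None))         # unauthenticated viewer, expected check
```

With the flawed check, an unauthenticated viewer is let through for as long as the run is live. With the second check, only the owner and listed friends can see the map at any point.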

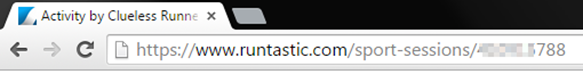

When a run is started, a new session is allocated sequentially:

It’s trivially easy to identify the session through iteration. If the run was still in progress, then with no further effort one could see the runner in real time.
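Sequential identifiers make this kind of enumeration easy because the next valid ID is always the current one plus one. Here is a rough sketch of the difference, using only Python’s standard library. The function names and the starting value are ours, purely for illustration, and say nothing about how Runtastic actually generated its IDs.

```python
import itertools
import secrets

# Hypothetical counter standing in for a sequentially allocated session ID.
_counter = itertools.count(1000)


def next_sequential_id() -> int:
    """Each new session gets the previous ID plus one, so neighbours are predictable."""
    return next(_counter)


def new_random_id() -> str:
    """128 bits from the OS CSPRNG, URL-safe; knowing one ID reveals nothing about others."""
    return secrets.token_urlsafe(16)


if __name__ == "__main__":
    first = next_sequential_id()
    second = next_sequential_id()
    print(second - first)   # always 1: an observer can walk the whole range
    print(new_random_id())  # different on every call
```

Unguessable IDs would only have made live sessions harder to find. They are no substitute for enforcing the privacy setting on the server, which is the check that was missing during a live run.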

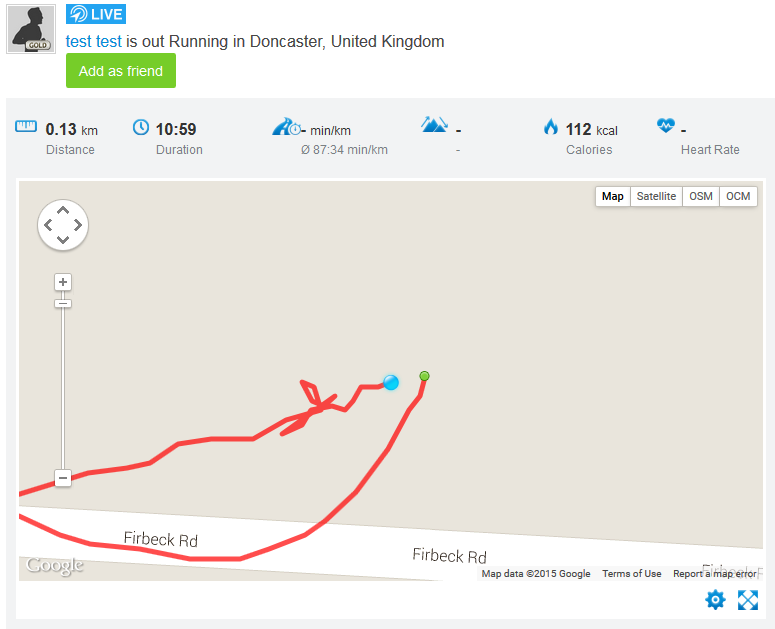

Here’s one of us, identified through this method. The run starts and stops at their house too:

Only after completion of the run was the map privacy setting effective!

We can also see the runner’s profile picture and account name, which is often the runner’s real name.

We should NOT be able to see this information; it was accessible during a run EVEN if we set the relevant privacy settings to prevent profile data being displayed.

Clearly, this was a significant privacy balls-up, exposing data and location information that was supposed to be private.

Disclosure

We decided this was quite a serious issue, so we reported it privately to Runtastic to avoid exposing lone runners.

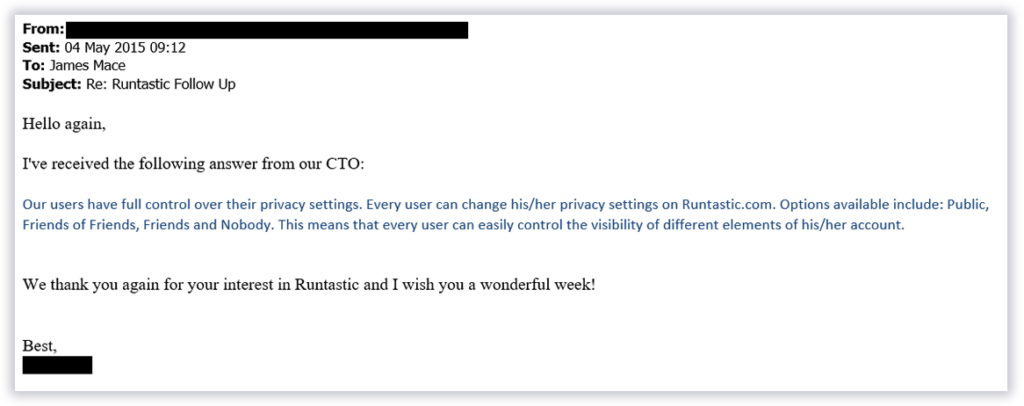

After one email which wasn’t responded to, we tried again and finally got a ‘holding’ response. A week later we received the following:

That response didn’t address the problem at all. We were in an ethical dilemma at the time: the vendor wouldn’t resolve the problem, but we didn’t want to expose runners. We decided not to disclose.

However

The bug was quietly fixed at the end of 2015, though we were not notified and only realised when the technique no longer worked. Hence we published. We have logs, photographic and video evidence to prove it.

Food for thought

We believe that Runtastic was sold to Adidas in the time between us privately disclosing the vulnerability and it finally being fixed.

I wonder if the vulnerability made the due diligence pack?