In my experience most vulnerabilities appear to be the result of a degradation in security over time. There are other influences in play of course, but as a headline that is pretty interesting, no?

Also, whilst vulnerabilities come in all shapes and sizes, the fixes for them are often very similar.

Based on over a decade’s worth of testing and consulting I would say that almost all vulnerabilities I see on networks and applications are there because of the following five things:

- The operating system hasn’t been patched and it’s now vulnerable to various security issues.

- That third party piece of software is now out of date and vulnerable to issues that have been fixed in later versions. It has likely installed a bunch of insecure dependencies for itself as well.

- That third party piece of software was vulnerable from the day it was installed due to default credentials, poorly protected admin interfaces or other poor programming choices.

- A security issue was introduced by setting a weak password, opening up a service or changing a setting with adverse effects.

- Custom web application code contains flaws and doesn’t work in quite the way you’d expect it to.

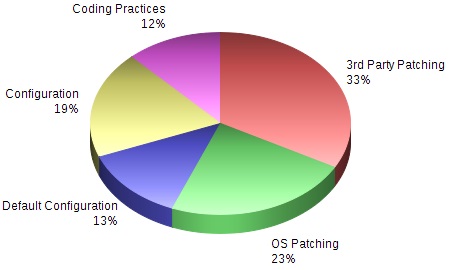

What’s interesting about these things is the distribution, or the weighting. I decided to do some number crunching and went through the top 250 high-risk vulnerabilities, categorising each one according to those five reasons. This is what I found…

- 69% of the security issues were caused by inaction on the part of the admin/owner (third-party patching 33%, OS patching 23%, default configuration 13%). Basically, security issues have multiplied due to a lack of human intervention. All software becomes increasingly insecure over time because hackers, pen testers and developers constantly find new issues, and the fixes only help systems that actually receive them.

- 19% of issues were caused by a misconfiguration or weak password and were a direct result of admin/owner actions.

- 12% were due to web applications containing custom code that contained insecurities.
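
As a quick sanity check on the arithmetic, the breakdown above can be tallied in a few lines of Python. This is only a sketch: the percentages are the ones quoted in the list, while the dictionary keys and the `group_totals` helper are names made up for illustration.

```python
# Category shares as quoted in the list above (percent of the 250 findings).
# The key names are illustrative, not taken from any tool.
shares = {
    "third_party_patching": 33,
    "os_patching": 23,
    "default_configuration": 13,
    "misconfiguration_or_weak_password": 19,
    "custom_web_code": 12,
}

# The post groups the first three categories together as "inaction".
INACTION = ("third_party_patching", "os_patching", "default_configuration")


def group_totals(shares):
    """Sum the shares into the groupings discussed in the post."""
    return {
        "inaction": sum(shares[name] for name in INACTION),
        "patching_only": shares["third_party_patching"] + shares["os_patching"],
        "total": sum(shares.values()),
    }


totals = group_totals(shares)
print(totals)  # {'inaction': 69, 'patching_only': 56, 'total': 100}
```

Patching on its own accounts for 56% of the findings; default configuration makes up the rest of the 69%.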

It is important to mention that the 12% of issues caused by web applications (coding practices) may seem a small number, but consider this: we tend to test large quantities of IP addresses on infrastructure tests, whereas a web application test generally covers just one or two distinct web applications, and they are generally Internet-facing. Simply put, each web application carries a higher risk than that 12% suggests.

So, what does this mean?

…well, nearly 70% of issues seen were caused by a lack of action (patches not being applied, software not being updated etc). Why does this not get done? It comes down to time. Patching takes time, upgrades take time and both tend to involve the dreaded downtime.

OS patching seems to be gaining traction and we are seeing fewer missing patches, but third-party software seems to be largely forgotten once installed. The same goes for the accompanying auto-updaters, which often install supporting software that can slip under the patching radar.

Whilst education and training are important when developing secure code (and not introducing security issues), the vast majority of issues seen could be fixed by keeping the systems in place up to date and secure.

Your technical team is not idle (I hope) but the boring task of patching and upgrading tends to be bottom of the list, and as a result security suffers.

So, check your patch policy, ensure it includes reviews of any third-party software, and come up with a workable and timely plan to keep systems up to date. By doing this you’ll address well over half of your issues (OS and third-party patching together accounted for 56% of the findings above).
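
One way to make the third-party review concrete is to keep an inventory of installed versions and compare it against the latest known releases. The sketch below assumes you already have both lists from somewhere; the package names, version numbers and the `outdated` helper are all hypothetical, and it only handles plain dotted numeric versions such as `2.10.0`.

```python
def parse_version(text):
    """Turn '2.4.10' into (2, 4, 10) so versions compare numerically.

    Assumption: versions are plain dotted integers (no 'beta' suffixes).
    """
    return tuple(int(part) for part in text.split("."))


def outdated(installed, latest):
    """List software that is behind the latest release, or not tracked at all."""
    stale = []
    for name, version in installed.items():
        newest = latest.get(name)
        if newest is None:
            # Nobody is tracking releases for this item: flag it for review.
            stale.append((name, version, "unknown"))
        elif parse_version(version) < parse_version(newest):
            stale.append((name, version, newest))
    return sorted(stale)


# Hypothetical inventory, for illustration only.
installed = {"example-cms": "4.2.1", "example-forum": "2.10.0", "example-plugin": "1.3"}
latest = {"example-cms": "4.9.0", "example-forum": "2.10.0"}

for name, have, want in outdated(installed, latest):
    print(f"{name}: installed {have}, latest {want}")
```

Anything reported as `unknown` is arguably the more important finding, since it is exactly the forgotten software that no one is watching for updates.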

Remove all default and weak passwords (the usual suspects like password1, admin1234 etc.) from the network and you’re even closer to that clean bill of health.
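
A rough first pass at finding those usual suspects could look like the following. It is a sketch under loud assumptions: it presumes you have an authorised export of account names and their configured passwords (for example from device configuration files), and both the account data and the `weak_accounts` helper are invented for illustration. Only `password1` and `admin1234` come from the text above; the other list entries are my own additions.

```python
# Usual-suspect passwords. "password1" and "admin1234" are from the post;
# the remaining entries are assumed examples.
COMMON_PASSWORDS = {"password1", "admin1234", "password", "admin", "changeme"}


def weak_accounts(accounts):
    """Return account names whose password is on the common list (case-insensitive)."""
    return sorted(
        name
        for name, password in accounts.items()
        if password.lower() in COMMON_PASSWORDS
    )


# Hypothetical export of account name -> configured password.
accounts = {
    "router-admin": "admin1234",
    "backup-svc": "Password1",
    "db-owner": "k7#Tq9!vLm2",
}

print(weak_accounts(accounts))  # ['backup-svc', 'router-admin']
```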