TL;DR

- Digital Forensics and Incident Response (DFIR) is about judgement, not just tools

- The difference between evidence and noise is context

- AI can support DFIR investigations, but it cannot replace human reasoning

Introduction

In my previous blog post, I wrote about finding your path into DFIR: how to get started, where to focus your time, and why curiosity and good fundamentals matter more than chasing every certification.

That is still true, but once you have been in the field for a while, you start to realise that getting into DFIR is only one part of it. The next step, and probably the harder one, is learning how to think like an investigator. For me, that is where the job really starts to get interesting.

DFIR is about judgement, not just tools

DFIR is not just about knowing where artefacts live or being able to run a collection and generate a timeline. Those things matter, but they do not make an investigation on their own. What matters is how you approach the evidence in front of you, how you decide what is important and what is just noise, and how you build a logical picture of what actually happened.

When you first get into DFIR, there is a natural tendency to focus on the technical side of it. You learn what specific artefacts mean, you learn how to use forensic tools, you get excited when you find something interesting. But at some point, you have to move beyond simply finding things and start asking whether they matter.

How investigators separate signal from noise

Modern investigations are noisy. Endpoints are noisy, cloud environments are noisy, users are noisy, and security tooling adds its own layer of noise on top of that. There is background activity everywhere, and you can spend hours looking at something that is interesting but completely irrelevant to the incident you are meant to be dealing with.

The real skill is knowing what matters right now. That is just as true in incident response as it is in forensics. Clients do not care how many artefacts you know about. They want to know whether the attacker is still there, how they got in, what they touched, and what needs to happen next.

To answer that properly, you need more than technical knowledge. You need context and judgement, which can only come from us as human investigators. That is why an investigative mindset matters so much. It is what stops you treating every oddity as equally important. It is what makes you stop asking “what have I found?” and start asking “what am I actually trying to prove or disprove here?”

That might be whether a user opened the attachment they insist they never touched. It might be whether an attacker had hands-on-keyboard access or whether a compromise was automated. It might be whether data was staged before it left the environment, or whether a suspicious logon was actually suspicious at all once you put it into context.

Context is what makes the evidence useful

A single artefact on its own is rarely enough. A process execution, a timestamp, a file write, or a logon event can all be useful, but they only really start to mean something when you look at what happened around them. What came before it? What followed it? Who was the user? Was there network activity? Does it line up with the other evidence, or does it point somewhere else entirely?

That is where the investigation lives, not in isolated artefacts, but in the relationships between them.
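The "what came before, what followed" questions can be sketched as a simple time-window pivot around one artefact. This is only a minimal illustration: the event records, field names, and the `context_window` helper are all hypothetical, and a real timeline would come from your own tooling rather than a hand-written list.

```python
from datetime import datetime, timedelta

# Hypothetical, hand-written events for illustration only (not from a real case).
EVENTS = [
    {"time": datetime(2024, 1, 1, 9, 58, 0), "type": "logon", "detail": "interactive logon"},
    {"time": datetime(2024, 1, 1, 10, 0, 30), "type": "file_write", "detail": "binary written to temp folder"},
    {"time": datetime(2024, 1, 1, 10, 1, 0), "type": "process", "detail": "binary executed"},
    {"time": datetime(2024, 1, 1, 10, 1, 20), "type": "network", "detail": "outbound connection"},
    {"time": datetime(2024, 1, 1, 14, 30, 0), "type": "process", "detail": "unrelated scheduled job"},
]


def context_window(events, pivot, minutes=5):
    """Return (before, after): events within `minutes` either side of the pivot.

    Events with exactly the pivot's timestamp are left out of both lists.
    """
    span = timedelta(minutes=minutes)
    ordered = sorted(events, key=lambda e: e["time"])
    before = [e for e in ordered if pivot["time"] - span <= e["time"] < pivot["time"]]
    after = [e for e in ordered if pivot["time"] < e["time"] <= pivot["time"] + span]
    return before, after


# Pivot on the process execution and look at what surrounds it.
pivot = EVENTS[2]
before, after = context_window(EVENTS, pivot)

for label, group in (("before", before), ("after", after)):
    for event in group:
        print(label, event["time"].isoformat(), event["type"], event["detail"])
```

The window size is a judgement call in itself. Too narrow and you miss the logon that explains the execution; too wide and you are back in the noise.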

One of the easiest traps to fall into in a DFIR investigation is giving too much weight to the first thing that looks suspicious. A PowerShell command with an encoded string, an odd scheduled task, a binary dropped into a temp folder, or a login at an unusual time can all look like the start of the story when you first find them. Quite often, though, they are not the story at all. In isolation, they look important because they resemble the kind of activity we are trained to look for, but once you widen the view and start looking at the surrounding context, they can turn out to be completely benign.
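For the encoded PowerShell case, one small way to widen the view is to decode the string before deciding what it means. The sketch below assumes the value was passed to PowerShell's `-EncodedCommand` parameter, which expects Base64 of a UTF-16LE string. The helper name and the sample command are hypothetical, and the sample is deliberately harmless.

```python
import base64


def decode_powershell_encoded_command(encoded: str) -> str:
    """Decode a Base64 string as used by PowerShell's -EncodedCommand (UTF-16LE)."""
    return base64.b64decode(encoded).decode("utf-16-le")


# Hypothetical sample: build an encoded command the same way PowerShell expects it,
# then decode it again. In a real case the encoded string comes from your evidence.
sample = base64.b64encode("Get-Date".encode("utf-16-le")).decode("ascii")
decoded = decode_powershell_encoded_command(sample)

print(sample)
print(decoded)
```

Decoding only tells you what ran. Whether it matters still depends on who ran it, when, and what happened around it.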

Placing the suspect behind the keyboard

It is also why I have always liked the idea of placing the suspect behind the keyboard. Some of my interest in that kind of investigative judgement comes from Brett Shavers’ work in the field, where he has consistently pushed the idea that good investigations are not just about collecting artefacts, but about understanding the person (or process) behind them and how they behaved.

At the end of the day, we are not just collecting data; we are trying to understand actions. Someone logged on, someone ran that command, someone accessed that mailbox, someone moved that data. Even where malware is involved, there is usually still a human decision-making process somewhere in the chain, and the closer you can get to understanding that, the better your investigation will be.

AI can assist, but it cannot replace judgement

I think this has become even more important with the introduction of AI.

AI will absolutely have a place in DFIR, and in many ways it already does. It can help make some of the repetitive parts of the job quicker, but none of that replaces investigative judgement.

Attackers will use it as well, whether that is to tidy up phishing lures, speed up development, or lower the barrier for entry.

AI can help you, but it cannot truly understand the nuance of a case in the way an investigator can. It does not know the environment like you do once you have spent time in it. It cannot properly weigh up competing explanations, spot when something does not quite fit, or decide how much confidence to place in a conclusion when the evidence is messy. It can give you something that sounds plausible, but in this field, plausible is not the same as right.

DFIR is not just looking at data. It is using reasoning and judgement. It is knowing when the evidence is strong, when it is weak, and when the most honest answer is that you cannot say for certain.

If anything, the more AI gets folded into both attack chains and defensive workflows, the more important it becomes for investigators to have strong fundamentals and good judgement. There will be more output, more noise, more automation, and probably more confidence in conclusions that have not been challenged properly.

AI has a habit of making confident statements based on patterns it has seen before, and its output depends heavily on the prompts used. We need AI to help point us at interesting artefacts and behavioural data, but we cannot rely on it to investigate or to provide the level of judgement that an experienced human can.

There are already some strong discussions in the DFIR community around AI and its impact. A couple of recent articles and videos worth looking at are below:

- 13Cubed – The AI Conversation I’ve Been Avoiding

- Brett Shavers – AI Won’t Replace DFIR Investigators. But It Will Replace Those Who Don’t Investigate

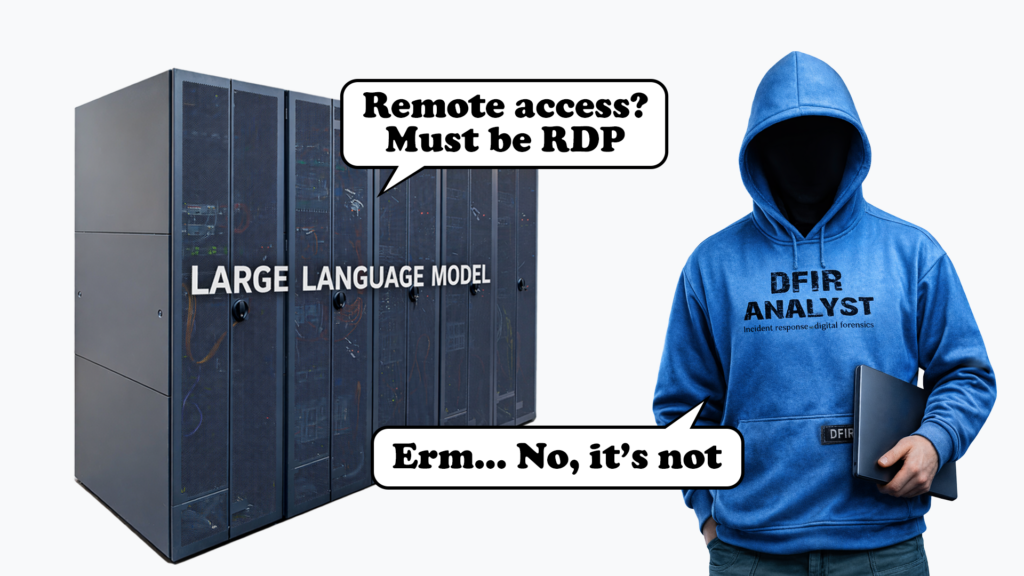

AI vs Investigator

To demonstrate AI hallucination and show why human judgement is a requirement, I downloaded event logs from a DFIR challenge named ‘Silence of the RAM’, designed by Tushar Arora. It is a scenario-focused exercise in which event logs are analysed, and the CTF was inspired by the EDR Freeze vulnerability.

I chose this exercise because it contains artefacts that AI can easily misread, such as PowerShell, service changes, browser downloads, and suspicious binaries. When asked to analyse the logs, the LLM confidently claimed there was no meaningful persistence, suggested RDP was the likely remote access path, treated the encoded PowerShell as administrative noise, and misidentified binaries and processes.

The table below compares what the evidence showed on analysis with what the LLM said:

| What the evidence showed | What the AI model said |
| --- | --- |
| The initial payload was AdobeFlashUpdater.exe | The user downloaded a generic browser update |
| Cyserver.exe crashed, and created a monitoring blackout | Defender or a random user process likely crashed due to instability |
| OpenSSH was enabled for persistence and re-entry | SSH-related commands were benign, and RDP was likely the access method |
| The attacker created servicemgmt and added it to Administrators | The account was likely built in, or a service account with no real significance |

The LLM sees remote access and assumes RDP. It sees a new account and treats it as routine. It sees a browser process and decides that it is a key part of the compromise.

A human investigator with judgement and context works differently: they ask what the evidence supports. They look at the sequence of events, the timing, and the context surrounding those artefacts. They see the privileged user account and dig deeper; they see that OpenSSH was enabled and ask why.
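Digging deeper into the privileged account can be as simple as testing the model's claim against the log records themselves. The sketch below is illustrative only: the records are synthetic and heavily simplified, not taken from the challenge logs, and the field names and `account_findings` helper are hypothetical. The event IDs are the standard Windows Security ones: 4720 for a user account being created and 4732 for a member being added to a security-enabled local group. Real 4732 events usually identify the member by SID, so a real parser would need to resolve that first.

```python
# Standard Windows Security event IDs.
ACCOUNT_CREATED = 4720       # A user account was created
ADDED_TO_LOCAL_GROUP = 4732  # A member was added to a security-enabled local group

# Synthetic, simplified records for illustration only (not real challenge data).
RECORDS = [
    {"event_id": 4624, "target": "alice", "group": None},
    {"event_id": 4720, "target": "servicemgmt", "group": None},
    {"event_id": 4732, "target": "servicemgmt", "group": "Administrators"},
]


def account_findings(records, account):
    """Summarise what the records say about when and how an account appeared."""
    created = any(
        r["event_id"] == ACCOUNT_CREATED and r["target"] == account for r in records
    )
    groups = [
        r["group"]
        for r in records
        if r["event_id"] == ADDED_TO_LOCAL_GROUP and r["target"] == account
    ]
    return {"created_in_logs": created, "added_to_groups": groups}


findings = account_findings(RECORDS, "servicemgmt")
print(findings)
```

An account that was created and promoted inside the incident window is hard to square with "built in, or a service account with no real significance", and that is the kind of check the claim should have to survive.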

That is why AI can help in DFIR, but it cannot replace an investigator’s judgement. It can point you in a direction, but that direction could be completely the wrong path, and you might then follow it further trying to validate what the model is confidently telling you.

What it can’t do is reliably decide what artefacts mean in context, or whether the story it is telling matches the evidence at all.

Learning to think like an investigator

Once you are in DFIR, the real development comes from building that investigative mindset: the ability to pick the right clue, ignore the noise that does not matter, challenge your own assumptions, and keep sight of the human activity behind the evidence you are looking at.

Anyone can learn to run a tool, but the harder part, and the bit that really makes the difference over time, is learning how to think like an investigator.